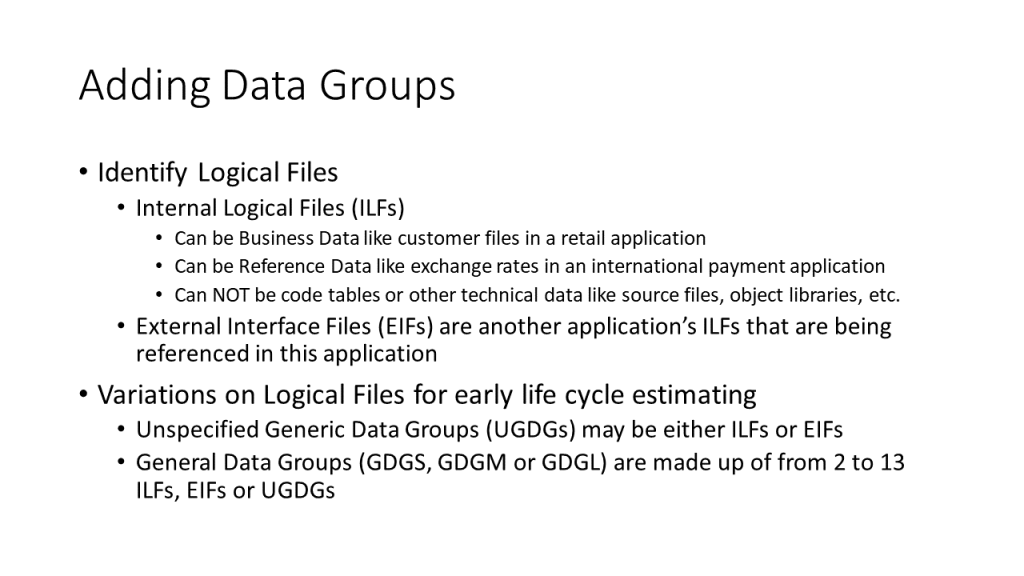

Niklaus Wirth developed the Pascal programming language. He also wrote a book titled “Algorithms + Data Structures = Programs.” Much could be said about both the language and the book, but the critical message here is that data plays an important part in an application. For estimating purposes, logical files + processes = applications. Logical files are either Internal Logical Files (ILFs) or External Interface Files (EIFs). The nouns in a user story are clues to the logical files. In the user story “As a Customer Service Representative, I can add customer information for a new customer,” customer information would be called out as a possible logical file. It is usually impossible to do a complete data analysis from the initial user stories, and attempting one would add too much overhead to the estimating process. Therefore, some higher-level structures are used in estimating. Unspecified Generic Data Groups are used when it is not possible to decide whether a logical file is an ILF or an EIF. General Data Groups are used when it is too early to identify specific logical files but the estimator has reason to believe that there are clusters of logical files that can be identified.

Internal Logical Files (ILFs) are the groups of data that are maintained by the application. Historically, they correspond to master files. In days of old, a master file might consist of card images on a computer tape. For an order master file, there would be a record corresponding to each order. There might be separate record types for ship-to addresses and bill-to addresses. There would probably be a separate record type for each line in the order, holding the product, quantity, price and other descriptive information like color. In a more modern implementation, this might be a relational database with the order lines in a separate table because they are a repeating group. Even though there are different record types or tables, a user would think of this as a single logical file containing orders. When identifying ILFs, it is the user perspective that carries the day. Another example might be a human resources application with ILFs for Employees and Departments. An employee might be assigned to a department or perhaps to multiple departments. This calls for an associative entity to tie these ILFs together. The associative entity would not be a third ILF as long as users did not think of it as a separate data group. However, if the assignments started to have significance in themselves, they would become an additional ILF. This would happen, for example, if start and end dates for the assignments were added, along with some type of appraisal information.

The function point weight of an ILF is determined by the number of Record Element Types (RETs) and the number of Data Element Types (DETs) that it contains. Usually, these counts are not known until a sprint is well under way. Roberto Meli analyzed a corpus of function point counts and concluded that the most likely size of an ILF is 7.7 function points. This value should be used for estimating purposes.
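The paragraph above does not spell out how RETs and DETs turn into a weight. As a sketch, assuming the standard IFPUG complexity matrix for data functions (low, average and high ratings weighted 7, 10 and 15 function points for an ILF), the following Python shows the detailed rating next to the 7.7 fallback used while the counts are still unknown. The function and constant names are hypothetical, not taken from any counting tool.

```python
from typing import Optional, Union

# IFPUG complexity matrix for data functions (ILFs and EIFs).
# Rows are RET bands (1, 2-5, 6+); columns are DET bands (1-19, 20-50, 51+).
COMPLEXITY_MATRIX = (
    ("low", "low", "average"),
    ("low", "average", "high"),
    ("average", "high", "high"),
)

# IFPUG unadjusted weights for an ILF at each complexity rating.
ILF_WEIGHTS = {"low": 7, "average": 10, "high": 15}

# Most likely ILF size from Meli's analysis, used when RETs/DETs are unknown.
EARLY_ILF_ESTIMATE = 7.7


def ret_band(rets: int) -> int:
    """Return the matrix row for a RET count."""
    return 0 if rets == 1 else (1 if rets <= 5 else 2)


def det_band(dets: int) -> int:
    """Return the matrix column for a DET count."""
    return 0 if dets <= 19 else (1 if dets <= 50 else 2)


def ilf_weight(rets: Optional[int] = None,
               dets: Optional[int] = None) -> Union[int, float]:
    """Function point weight of an ILF (hypothetical helper)."""
    if rets is None or dets is None:
        # Counts not known yet, which is the usual case early in a sprint.
        return EARLY_ILF_ESTIMATE
    if rets < 1 or dets < 1:
        raise ValueError("an ILF has at least one RET and one DET")
    return ILF_WEIGHTS[COMPLEXITY_MATRIX[ret_band(rets)][det_band(dets)]]


print(ilf_weight())       # 7.7
print(ilf_weight(4, 30))  # 10
```

The fallback sits between the low (7) and average (10) weights, which is consistent with most ILFs being rated low or average once they are counted in detail.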

The examples given above involve business data. When running a business, it is common to gain new customers, hire new employees, transact business with the customers and pay the employees. Most applications deal with only a subset of these situations. However, if there were a customer ILF, we would expect to have user stories about how to add new customers. If these are missing, an estimator should check to see why. If the user stories were simply omitted, the functionality of the application will be understated unless they are added. If the data groups are being maintained by another application, then they are External Interface Files (EIFs), not ILFs. EIFs are discussed below.

Some ILFs consist of reference data. Reference data is usually indicated by a user story like, “As an administrator, I can maintain a list of fee multipliers for each country that we do business in.” A consulting company might use such a list. In the United States, a standard daily consulting fee might be charged. The same fee might apply in Mexico and Canada, so their multipliers would be 1. However, the multiplier might be 1.5 in most countries in South America, and larger in some. The relationship only works in one direction. We know that Mexico has a multiplier of 1, but a multiplier of 1 applies equally to the United States, Canada and Mexico, so knowing the fee multiplier is not enough to identify the country involved. There are many possible examples of this type of ILF; a table that specifies costs for different shipping zones might be another. Sometimes it is not clear whether an ILF holds business data or reference data. It does not matter: both types of ILF have the same function point weight, and estimating either as 7.7 function points makes the most sense.

Some files are not functional in nature and therefore do not have any function points associated with them. One of these is a code table. A typical example is the table of abbreviations that the travel industry uses for airports. For example, EWR corresponds to Newark International Airport. Unlike the reference ILF above, there is a one-to-one correspondence between the code and the value. Theoretically, a user should be able to enter either one. In practice, if someone had to enter the entire name, it would frequently be inconsistent. One person would enter Newark Airport and another would enter Newark International Airport. The code table adds no functionality; it is a technical solution that makes inputs within the application easier and more consistent. Code tables are not logical files. However, they are considered when counting SNAP points for estimating changes.
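The difference between the fee-multiplier ILF and the airport code table comes down to whether the mapping can be reversed. This minimal Python sketch checks whether every value maps back to exactly one key. The data is hypothetical: the Brazil multiplier and the JFK entry are illustrative and not from the text, and the function names are made up for the example.

```python
from collections import defaultdict


def inverse(mapping: dict) -> dict:
    """Map each value back to every key that produces it."""
    result = defaultdict(list)
    for key, value in mapping.items():
        result[value].append(key)
    return dict(result)


def is_one_to_one(mapping: dict) -> bool:
    """True when no two keys share a value, so the mapping can be reversed."""
    return len(set(mapping.values())) == len(mapping)


# Hypothetical reference data (an ILF): fee multiplier for each country.
fee_multipliers = {"United States": 1.0, "Canada": 1.0, "Mexico": 1.0, "Brazil": 1.5}

# Hypothetical code table (not a logical file): airport name for each code.
airport_codes = {"EWR": "Newark International Airport",
                 "JFK": "John F. Kennedy International Airport"}

print(is_one_to_one(fee_multipliers))  # False
print(inverse(fee_multipliers)[1.0])   # ['United States', 'Canada', 'Mexico']
print(is_one_to_one(airport_codes))    # True
```

The check captures only the reversibility criterion described above. Deciding that a table is a code table rather than a logical file still depends on the user's view of the data.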

Other files are not counted as data groups either. The source files that contain the programs implementing the application are not considered. The same is true of object files. Many applications have files that contain settings for various processes. Unless you are developing a compiler or a similar application, none of these are considered part of the functionality. For a compiler, the source and object code are considered inputs and outputs.

External Interface Files (EIFs) are the ILFs of other applications that are referenced by this application. As was stated before, most of the nouns in a user story refer to data groups. If a data group is maintained in the application, it is an ILF. If it is maintained in another application and simply referenced in this one, it is an EIF. This must be evaluated functionally: if an ILF from another application is copied into this one, it is still an EIF, and the transactions used to duplicate the logical file are not counted as part of the functionality being estimated. As with an ILF, the functional weight of an EIF depends on the number of Record Element Types (RETs) and Data Element Types (DETs) being referenced. Just as with ILFs, this information is usually unknowable before the iteration in which the development is done. Therefore, it is best to use the value of 5.4 function points established by Roberto Meli’s research.

When software is developed using the waterfall methodology, all of the analysis is done early in the life cycle. ILFs and EIFs are identified before any design or coding is done. When using agile development methodologies, analysis, design and coding take place concurrently. By the time any data modeling has been done, the code to access the data is already being developed, so it is too late to use that information for estimating purposes. Two other data constructs can be used before logical files can be accurately identified: Unspecified Generic Data Groups (UGDGs) and General Data Groups. Both are described below. Identifying data groups at that level is sufficient for estimating new development. However, this works only for the first iteration of an agile development and for functionality added in subsequent iterations. In order to estimate the impact of changes to a data group, it is necessary to have identified the data groups down to the ILF or EIF level. This is not a problem. Once the team has implemented a data group, they will understand the data down to the level of ILFs and EIFs.

Unspecified Generic Data Groups (UGDGs) are used when a logical file is identified, but it is not clear whether it is an ILF or an EIF. This is common when working with initial user stories. Some data will be needed, but it will not be clear whether the application must maintain it or whether it can be read from another application. EIFs are usually files that can be accessed directly by a key, and their data is often accessed through an application programming interface. If the data group turns out to be an ILF, transactions will be needed to maintain it. These add functionality to the application, although they might not come into play until a later iteration. Here is a possible situation. Initially, it appears that some data may be read from another application. You estimate and begin the iteration based on this assumption. As you work with this data, you realize that it is not reliable and that you will need to maintain a clean version of it. You would probably complete the iteration with the sub-optimal data and then, in a later iteration, turn the UGDG into an ILF with the necessary maintenance transactions. Estimate each UGDG as 7.1 function points.
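The rules for choosing between an ILF, an EIF and a UGDG reduce to one question: who maintains the data, if that is known yet? This Python sketch encodes that question together with the early weights quoted in this post (7.7, 5.4 and 7.1 function points). The `maintained_here` flag and the function names are hypothetical.

```python
from enum import Enum
from typing import Optional


class DataFunction(Enum):
    ILF = "Internal Logical File"
    EIF = "External Interface File"
    UGDG = "Unspecified Generic Data Group"


# Early estimating weights (function points) quoted in the text.
EARLY_WEIGHTS = {
    DataFunction.ILF: 7.7,
    DataFunction.EIF: 5.4,
    DataFunction.UGDG: 7.1,
}


def classify(maintained_here: Optional[bool]) -> DataFunction:
    """Classify a data group by who maintains it.

    maintained_here: True if this application maintains the data,
    False if another application maintains it (even when this
    application reads a local copy), None if that is not yet known.
    """
    if maintained_here is None:
        return DataFunction.UGDG
    return DataFunction.ILF if maintained_here else DataFunction.EIF


def early_weight(maintained_here: Optional[bool]) -> float:
    """Early estimate for one data group, in function points."""
    return EARLY_WEIGHTS[classify(maintained_here)]


# Customer data maintained here, product data read from another
# application, pricing data whose ownership is still undecided.
total = early_weight(True) + early_weight(False) + early_weight(None)
print(round(total, 1))  # 20.2
```

When the pricing data later proves to be an ILF, re-running the estimate with `maintained_here=True` replaces its 7.1 with 7.7, and the new maintenance transactions are estimated separately.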

General Data Groups are another construction that can be used when ILFs and EIFs cannot be identified completely. They come in three sizes:

- Small (GDGS) contains 2-4 ILFs, EIFs or UGDGs and has an expected size of 21.4 function points

- Medium (GDSM) contains 5-8 ILFs, EIFs or UGDGs and has an expected size of 46.3 function points

- Large (GDSL) contains 9-13 ILFs, EIFs or UGDGs and has an expected size of 78.3 function points
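The three General Data Group sizes amount to a lookup from the expected number of logical files to an expected size. This sketch reuses the labels and values from the list above; the function name is hypothetical, and treating counts outside the 2-13 range as an error is an assumption made for the example.

```python
from typing import NamedTuple


class GeneralDataGroup(NamedTuple):
    label: str
    min_files: int      # smallest number of ILFs, EIFs or UGDGs covered
    max_files: int      # largest number of ILFs, EIFs or UGDGs covered
    expected_fp: float  # expected size in function points


GENERAL_DATA_GROUPS = (
    GeneralDataGroup("GDGS", 2, 4, 21.4),
    GeneralDataGroup("GDSM", 5, 8, 46.3),
    GeneralDataGroup("GDSL", 9, 13, 78.3),
)


def general_data_group(expected_files: int) -> GeneralDataGroup:
    """Pick the General Data Group size for an expected file count."""
    for group in GENERAL_DATA_GROUPS:
        if group.min_files <= expected_files <= group.max_files:
            return group
    raise ValueError(
        f"{expected_files} files is outside the 2-13 range covered by General Data Groups"
    )


group = general_data_group(6)
print(group.label, group.expected_fp)  # GDSM 46.3
```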

General Data Groups are used when the estimator suspects that there are relationships in a data group that will eventually cause it to be divided into several ILFs or EIFs. In the example with the Employee data group, the estimator might anticipate that an Assignment ILF will eventually be required. The Employee data would then be considered a GDGS instead of an ILF. A similar situation frequently occurs with EIFs. The application being estimated may require 10 pieces of data from another application. Obtaining them may require accessing one ILF in that application, ten ILFs or any number in between. There would therefore be anywhere from 1 to 10 EIFs in the application being estimated. Estimating this as a GDSM might be reasonable until more is known.
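A quick calculation shows why a GDSM is a reasonable placeholder in the EIF example. Assuming each EIF would be estimated at 5.4 function points, 1 to 10 EIFs span 5.4 to 54.0 function points, and the GDSM value of 46.3 falls inside that range. The constant and function names are hypothetical.

```python
from typing import Tuple

EIF_ESTIMATE = 5.4    # early estimate per EIF, from the text
GDSM_ESTIMATE = 46.3  # medium General Data Group, from the list above


def eif_range(min_eifs: int, max_eifs: int) -> Tuple[float, float]:
    """Lowest and highest size if each EIF were estimated separately."""
    return round(min_eifs * EIF_ESTIMATE, 1), round(max_eifs * EIF_ESTIMATE, 1)


low, high = eif_range(1, 10)
print(low, high)                     # 5.4 54.0
print(low <= GDSM_ESTIMATE <= high)  # True
```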

There is a rule of thumb that is worth considering. In most IT applications, there tends to be a 70%/30% split between transaction functionality and data functionality. Transaction functions include EIs, EOs, EQs and any of the estimating generalizations such as UGOs and UGPs. Data functions are the ones discussed above. This is only a rule of thumb. If you have deviated a little, like 60%/40%, it is not worth worrying about. If you have deviated a lot, then you are probably still right if you understand why your application is unusual.
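The rule of thumb can be checked with simple arithmetic once transaction and data estimates are summed. In this sketch, the 10-percentage-point tolerance is an assumption chosen to match the 60%/40% example, and the function names are hypothetical.

```python
def data_share(transaction_fp: float, data_fp: float) -> float:
    """Fraction of the total size that comes from data functions."""
    total = transaction_fp + data_fp
    if total <= 0:
        raise ValueError("total function points must be positive")
    return data_fp / total


def near_rule_of_thumb(transaction_fp: float, data_fp: float,
                       expected_data_share: float = 0.30,
                       tolerance: float = 0.10) -> bool:
    """True when the data share is within `tolerance` of the 30% rule of thumb.

    The 0.10 tolerance is an assumption matching the 60%/40% example.
    Rounding avoids floating-point noise such as 0.4 - 0.3 = 0.10000000000000003.
    """
    deviation = round(abs(data_share(transaction_fp, data_fp) - expected_data_share), 6)
    return deviation <= tolerance


print(near_rule_of_thumb(140, 60))  # True  (70%/30%)
print(near_rule_of_thumb(60, 40))   # True  (60%/40%)
print(near_rule_of_thumb(40, 60))   # False (40%/60%)
```

A False result is a prompt to understand why the application is unusual, not proof that the estimate is wrong.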
